For legal, PE, and enterprise teams

Claude is a chatbot. mixus runs your workflows.

Same AI under the hood. mixus wraps it in the playbooks, learnings, email handoffs, and org controls your firm actually runs on.

At-a-glance

What mixus delivers that Claude doesn’t.

10 capabilities compared

Delegated email with colleagues CC'd

Forward or CC agent@mixus.com. Replies land in the same thread.

Word redlines bound to your playbook

Every tracked change cites the firm rule it came from.

Deliverables built to your org's standards

Spreadsheets and docs follow firm templates, not generic output.

Shared playbooks with enforceable rules

Upload the firm playbook once. Every reviewer uses it.

Learns from accept / dismiss decisions

Rules sharpen as your team reviews, not one-off prompts.

Human-in-the-loop checkpoints

Agents halt at configured gates and wait for sign-off.

Long-running agents across steps

Runs persist. Artifacts pass to the next reviewer.

Cost per run in USD, with duration

Reconcile AI spend to the matter, like a time entry.

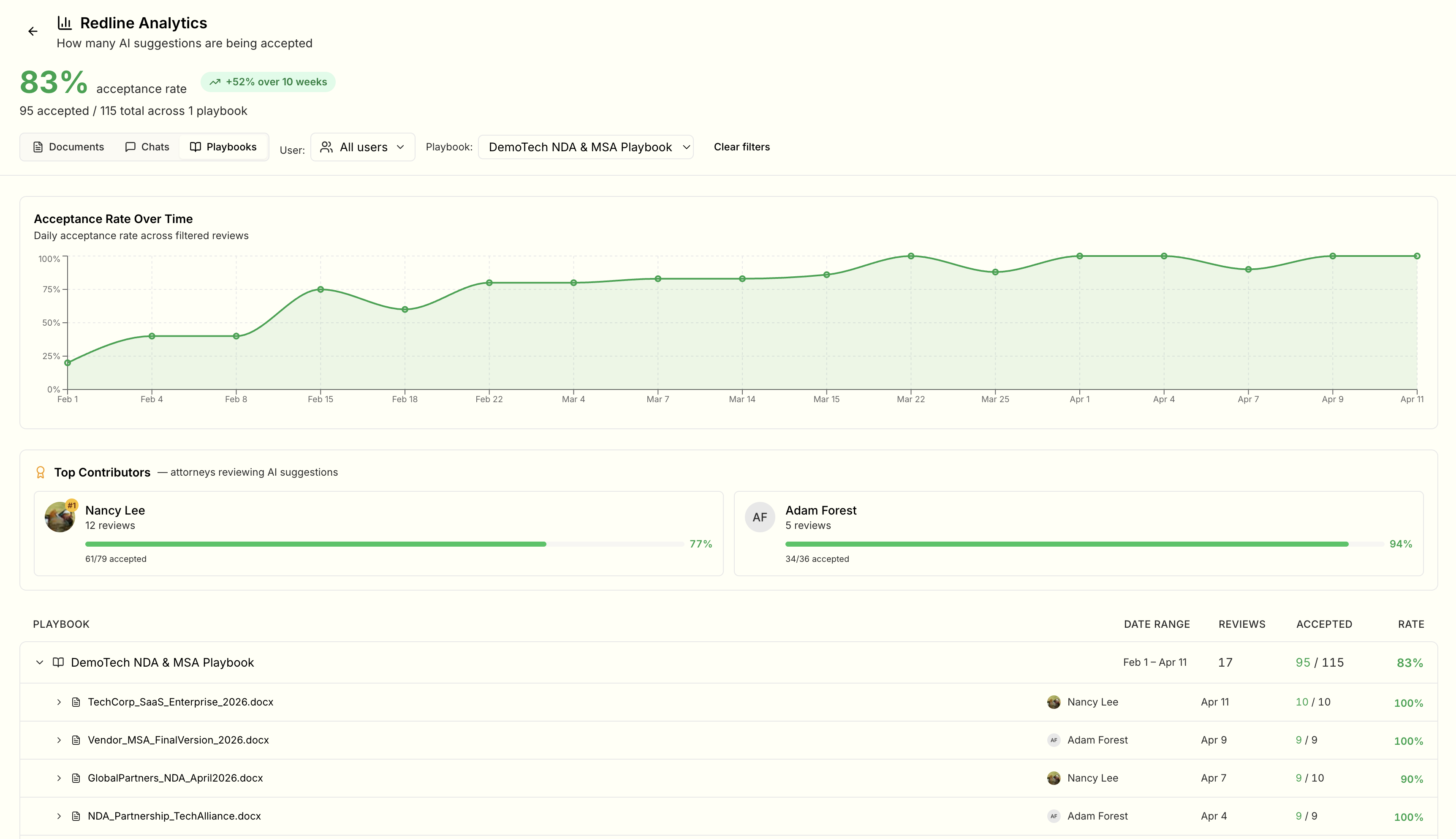

Org-wide analytics and admin

See who ran what, on which playbook, with what result.

Zero data retention + SOC 2 Type II

One vendor to evaluate. Nothing trains a model.

Where work happens

- Delegated email with colleagues CC'd

- Word redlines bound to your playbook (Claude: partial)

- Deliverables built to your org's standards (Claude: partial)

How work gets done

- Shared playbooks with enforceable rules

- Learns from accept / dismiss decisions

- Human-in-the-loop checkpoints

- Long-running agents across steps (Claude: partial)

Controls and trust

- Cost per run in USD, with duration

- Org-wide analytics and admin (Claude: partial)

- Zero data retention + SOC 2 Type II (Claude: partial)

- SOC 2 Type II

- Zero data retention

- HIPAA attestation

- Anthropic, OpenAI, Google

Built for how your team works

Only on mixus.

Acme term sheet — please redline

Attached is the latest Acme term sheet. Please redline against our term sheet playbook and flag anything over our fallback on liquidation preferences. Alex and Jordan — looping you in.

Re: Acme term sheet — please redline

Redlined against Acme term sheet playbook — 12 suggestions applied. Flagged 2 fallback escalations on §4.2 (liquidation preferences) for your review.

Cost $0.42 · 1m 14s

01

CC the agent. Get the deliverable in the thread.

Forward or CC agent@mixus.com on the live thread. The agent picks up the full context, runs the redline, and replies with the .docx attached so everyone on the thread sees it together.

02

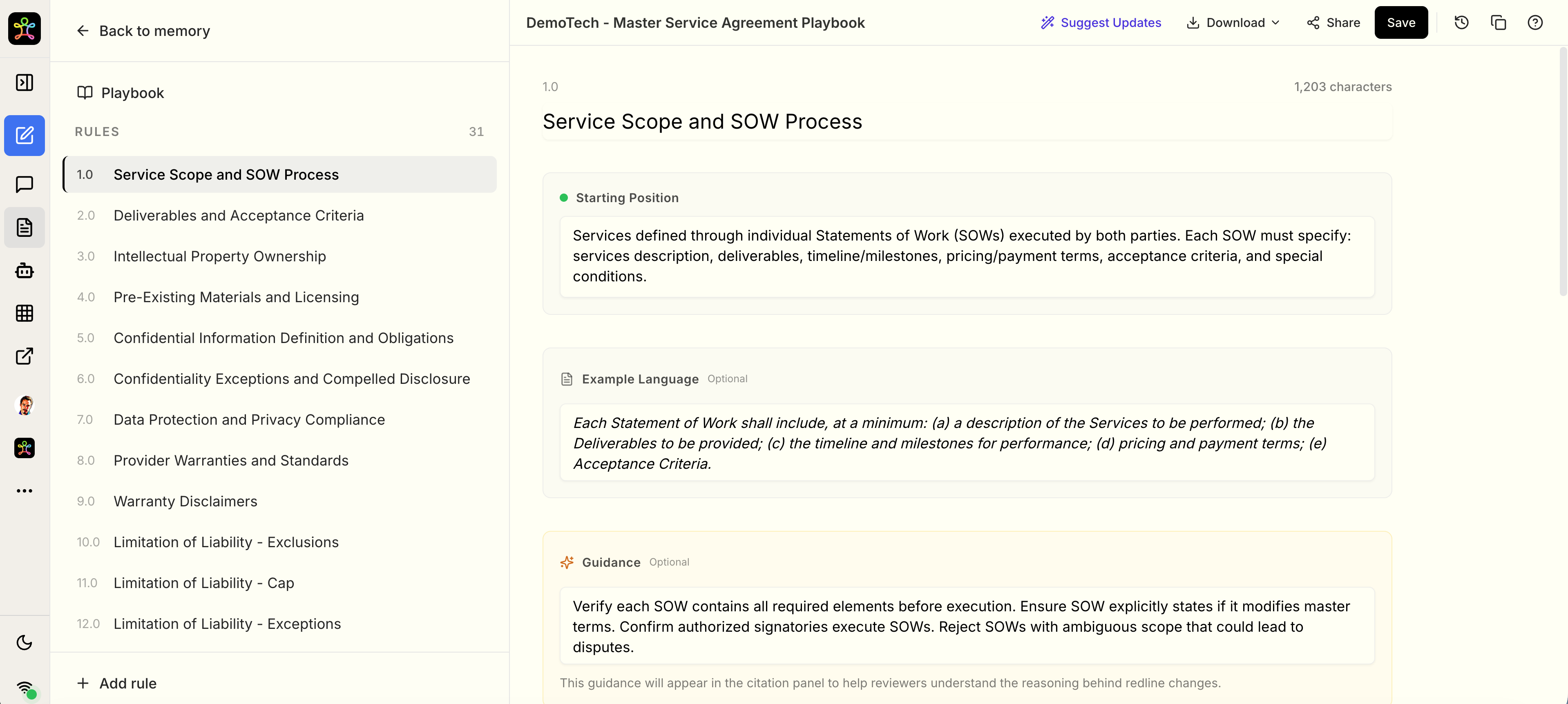

Your playbook becomes enforceable rules.

Drop in a PDF, DOCX, or past deal and mixus generates a structured playbook with starting, fallback, and unacceptable positions. Reviewers refine it over time through accept and dismiss signals.
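For readers who think in data structures, here is a minimal sketch of what one playbook rule with the three position tiers and reviewer signals might look like. It is not the mixus schema: the `PlaybookRule` class, its field names, and the sample clause values are all hypothetical and exist only to illustrate the idea.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PlaybookRule:
    """One rule with three position tiers (hypothetical schema, not mixus's)."""
    clause: str
    starting: str        # position the firm opens with
    fallback: str        # compromise the firm will accept
    unacceptable: str    # position that should be escalated
    accepted: int = 0    # suggestions citing this rule that reviewers accepted
    dismissed: int = 0   # suggestions citing this rule that reviewers dismissed

    def record(self, accepted: bool) -> None:
        """Store one reviewer accept or dismiss signal."""
        if accepted:
            self.accepted += 1
        else:
            self.dismissed += 1

    def acceptance_rate(self) -> Optional[float]:
        """Share of suggestions accepted, or None before any review."""
        total = self.accepted + self.dismissed
        return self.accepted / total if total else None


# Sample values are invented for illustration only.
rule = PlaybookRule(
    clause="Liquidation preferences",
    starting="example starting position",
    fallback="example fallback position",
    unacceptable="example unacceptable position",
)
for signal in (True, True, True, False):
    rule.record(signal)
```

A rule whose acceptance rate stays low over many reviews would be a natural candidate for rewording, which is one way to picture "refine it over time".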

03

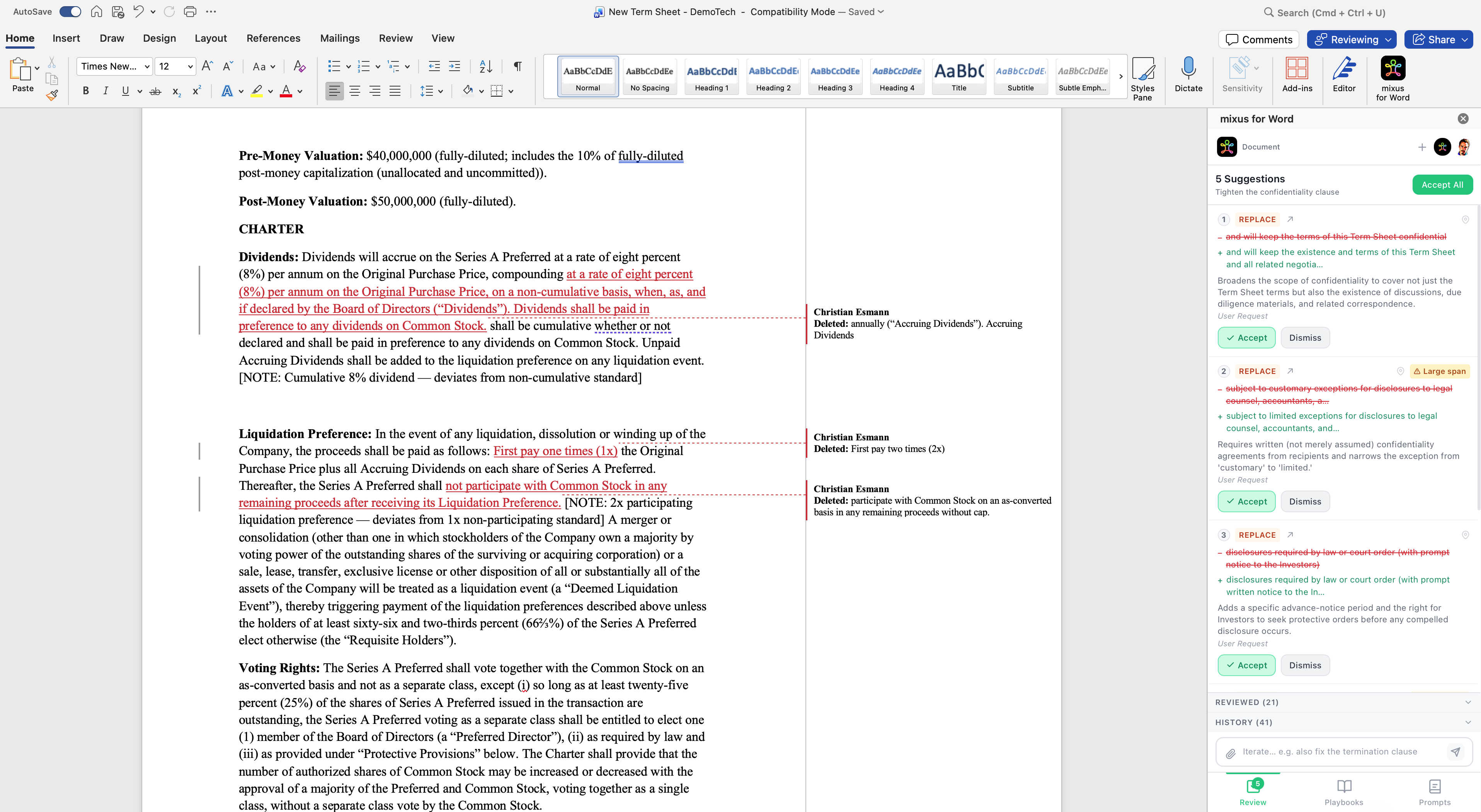

Word redlines that cite the rule, not just the AI.

Claude for Word makes tracked changes too. mixus's suggestions trace back to a specific playbook rule your firm defined, so reviewers know why every edit was proposed — and partners can audit it later.

04

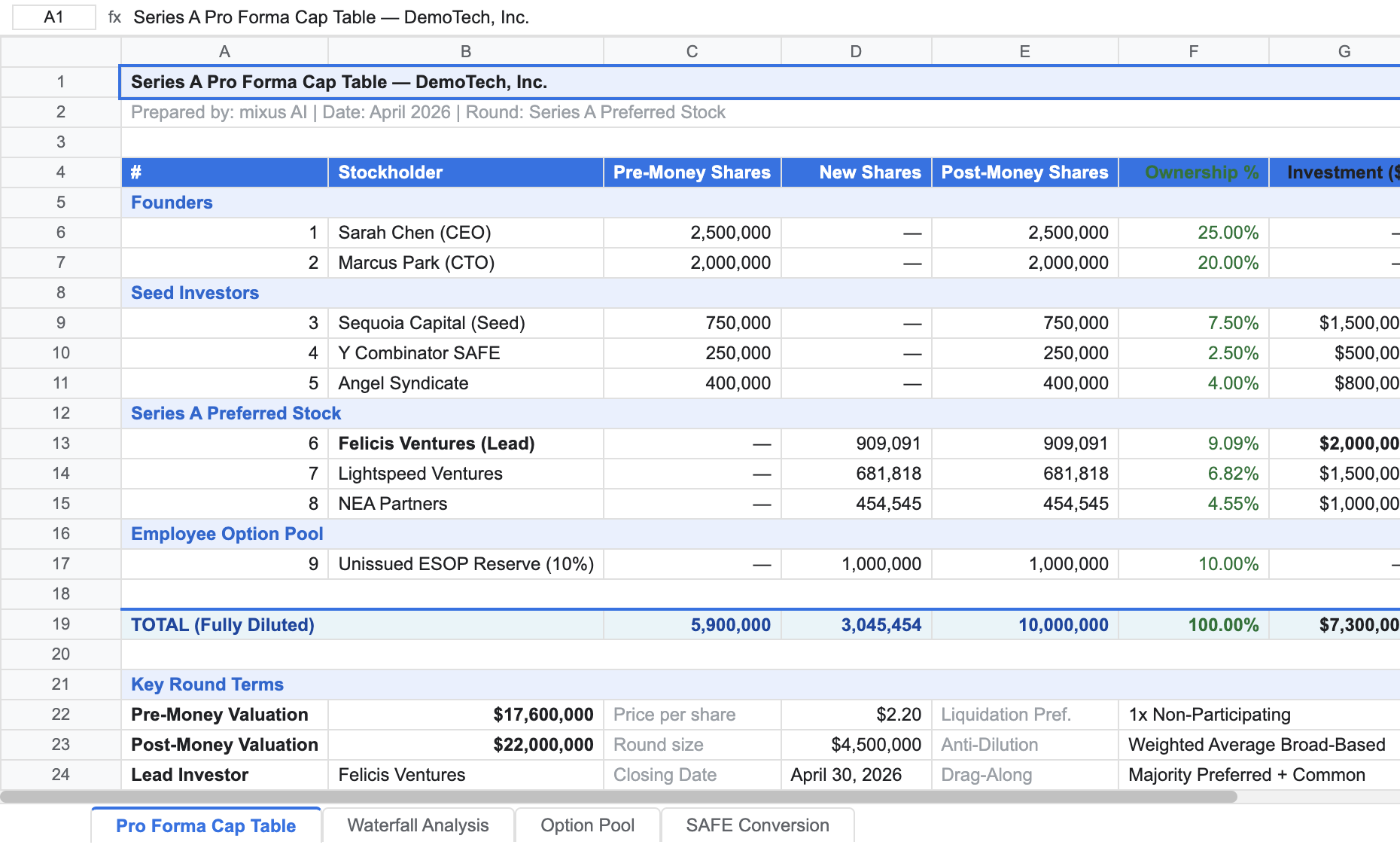

Working spreadsheets, not chat tables.

Long-running agents produce real .xlsx files with live formulas (SUM, cross-references, percentages) under your org formatting standards, with cost and duration per run.

05

Admin visibility the firm expects.

See adoption, acceptance rates trending over time, top contributors, and per-document review history across the organization. Partners and ops get one view.

Common questions

Answers before you ask.

Where does my client data live?

In mixus's SOC 2 Type II certified infrastructure, with HIPAA attestation. AI calls use Anthropic's zero data retention API, so prompts and outputs are never stored by the model provider and never used for training.

How is this different from Claude for Word?

Claude for Word (beta, April 2026) makes tracked changes too. The difference is enforcement: mixus binds every redline to a specific playbook rule your firm defined, learns from reviewer accept/dismiss decisions over time, and gives partners org-wide analytics on how the playbook is being used. Claude for Word is a general assistant; mixus is a firm-wide review system.

How is AI spend billed?

Cost per run in USD with the duration of each run, so finance and matter leads can reconcile AI time the way they track analyst time.

What models run under the hood?

Anthropic, OpenAI, and Google. Redline pipelines use a validated allowlist. No single-vendor lock-in at the model layer.

For procurement and deep dives

See the full capability matrix

Capability-level view

Last updated · Compared to Claude (Team / chat and related apps)

| Capability | Claude (typical) | mixus |
|---|---|---|
| Surface area | | |
| Word redlines tied to firm playbook rules | Claude for Word (beta, April 2026) produces native tracked changes but applies general AI judgment, not firm-specific playbooks or learned rules. | Every tracked change cites the playbook rule that triggered it, and reviewer accept/dismiss signals refine rules across the firm. |
| How work is defined | | |
| Shared playbooks and reviewer training signals | Standards live in prompts, wikis, and reviewer habit; consistency is manual. | Playbooks and feedback loops are part of the product surface, with org-level visibility into how suggestions are accepted or revised over time. |
| Email & collaboration | | |
| Delegated email with thread context, CCs, rich attachments, and replies in-thread | Email is not the native control plane; teams re-type context into chat, lose the canonical thread, and juggle versions outside the inbox. | Forward or CC the agent on the live thread, attach the file types you already use, and get redlines, models, or other artifacts back in the same conversation so colleagues review together, with no copy-paste relay. |
| Security & compliance | | |
| Zero data retention on AI layer; SOC 2 Type II, HIPAA | Anthropic states it does not train on Team/Enterprise data. Conversations are stored on Anthropic infrastructure; your compliance team evaluates Anthropic directly. | AI calls use Anthropic's zero data retention API. Client data stays in mixus's SOC 2 Type II certified infrastructure with HIPAA attestation. One vendor to evaluate. |
| Model flexibility (Anthropic, OpenAI, Google) | Claude only. Switching providers means switching products entirely. | Multiple AI models available; redline pipelines use validated model allowlists. No single-vendor lock-in at the model layer. |
| Production controls | | |
| Configured stops for human approval before the next agent step | You can ask the model to wait, but continuation is ultimately conversational. Easy to miss a gate unless someone watches the session. | Agent workflows can require explicit human approval signals and halt until recorded, then continue. Sensitive runs match firm escalation discipline. |
| Cost per run in USD, with duration visibility | Billing and usage are often aggregated at the workspace or subscription layer; mapping a single run to cost and elapsed time usually means manual spreadsheets. | Cost per run in USD together with duration mirrors the transparency partners expect from hourly narratives and makes matter budgeting defensible. |
| Depth of execution | | |
| Extended agent runs producing spreadsheets, decks, and documents | Claude creates .xlsx, .docx, .pptx, and PDF files in chat, but outputs reflect generic AI judgment rather than firm templates, and runs aren't oriented around multi-step, multi-reviewer hand-offs. | Agents oriented toward long-running outputs and structured deliverables under org configuration, with cost and duration per run. |
| Governance | | |
| Org-wide admin, monitoring, and access patterns | Enterprise controls exist; operational analytics depend on how you deploy and integrate. | Shared organization model with admin-oriented monitoring for how AI review is used in practice. |
| Continuity | | |
| Artifacts and context structured for the next reviewer | Threads are powerful but not automatically a matter system of record. | Workflow outputs are organized for team pickup inside the org workspace. |
| Ecosystem | | |
| Microsoft 365 integration | Claude for Word (beta, Team and Enterprise plans) ships as a Microsoft add-in; also available inside Microsoft 365 Copilot for eligible customers. | mixus ships its own Word add-in plus email and agent surfaces on top of your existing review habits; redlines, playbooks, and analytics share one organizational workspace. |
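
The "Configured stops for human approval" row in the table describes a workflow that halts until a sign-off is recorded. As a rough sketch of that pattern, and not a description of how mixus implements it, the hypothetical `run_with_gates` function below runs named steps in order and stops before any gated step that lacks approval. Every name here is an assumption made for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Set, Tuple


@dataclass
class RunLog:
    """What the run did: completed steps and recorded approvals."""
    steps_done: List[str] = field(default_factory=list)
    approvals: List[str] = field(default_factory=list)


def run_with_gates(
    steps: List[Tuple[str, Callable[[], None]]],
    gates: Set[str],
    approve: Callable[[str], bool],
) -> RunLog:
    """Run steps in order; halt before a gated step unless approve() says yes."""
    log = RunLog()
    for name, action in steps:
        if name in gates:
            if not approve(name):
                break  # no sign-off recorded, so the run halts here
            log.approvals.append(name)
        action()
        log.steps_done.append(name)
    return log


# Hypothetical three-step run with a gate before sending anything out.
steps = [
    ("extract", lambda: None),
    ("redline", lambda: None),
    ("send", lambda: None),
]
halted = run_with_gates(steps, gates={"send"}, approve=lambda step: False)
approved = run_with_gates(steps, gates={"send"}, approve=lambda step: True)
```

In the halted run only `extract` and `redline` complete; in the approved run all three steps complete and the sign-off for `send` is recorded in the log. The point of the sketch is that the gate lives in the workflow itself, so it does not depend on someone remembering to ask the model to wait.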

Primary sources

- Anthropic: Claude for Word (beta, launched April 10, 2026). Retrieved 2026-04-16.

- Anthropic: Use Claude for Word (help center). Retrieved 2026-04-16.

- Anthropic: Create and edit files in Claude. Retrieved 2026-04-16.

- Anthropic: Claude in Microsoft 365 Copilot. Retrieved 2026-04-16.

Competitive products change frequently. Treat this page as a point-in-time snapshot; confirm critical details before external distribution.

See mixus on your own contracts.

30 minutes. Your playbook, your template, your team setup.

Book a demo